What Is the Truth About the Viral MIT Study Claiming 95% of AI Deployments Are a Failure – A Critical Analysis

Introduction: The Statistic That Shook the AI World

When headlines screamed that 95% of AI projects fail, the internet erupted. Boards panicked, investors questioned their bets, and LinkedIn filled with hot takes. The claim, sourced from an MIT NANDA report, became a viral talking point across Fortune, Axios, Forbes, and Tom’s Hardware (some of my regular reads), and more than ten Substack newsletters dissecting the story landed in my inbox. But how much truth lies behind this statistic? Is the AI revolution really built on a 95% failure rate, or is the reality more nuanced?

This article takes a grounded look at what MIT actually studied, where the media diverged from the facts, and what business leaders can learn to transform AI pilots into measurable success stories.

The Viral 95% Claim: Context and Origins

What the MIT Study Really Said

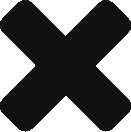

The report, released in mid‑2025, examined over 300 enterprise Generative AI pilot projects and found that only 5% achieved measurable profit and loss (P&L) impact; the remaining 95% is the figure the headlines seized on. Its focus was narrow, centred on enterprise Generative AI (GenAI) deployments that automated content creation, analytics, or decision‑making processes.

Narrow Scope, Broad Misinterpretation

The viral figure sounds alarming, yet it represents a limited dataset. The study assessed short‑term pilots and defined success purely in financial terms within twelve months. Many publications mistakenly generalised this to mean all AI initiatives fail, converting a specific cautionary finding into a sweeping headline.
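
To see how much the headline number depends on the definition of success, here is a minimal Python sketch. The pilot names and timings are hypothetical, invented purely for illustration and not taken from the MIT report; `Pilot` and `failure_rate` are likewise illustrative names. The sketch counts a pilot as a failure when it shows no measurable P&L impact inside a chosen window, then compares a twelve-month window with a twenty-four-month one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Pilot:
    name: str
    # Months until the pilot first showed measurable P&L impact.
    # None means no impact was ever observed.
    months_to_pnl_impact: Optional[int]


def failure_rate(pilots, window_months):
    """Share of pilots with no measurable P&L impact inside the window."""
    failures = sum(
        1
        for p in pilots
        if p.months_to_pnl_impact is None
        or p.months_to_pnl_impact > window_months
    )
    return failures / len(pilots)


# Hypothetical data for illustration only; NOT figures from the MIT report.
pilots = [
    Pilot("compliance-summaries", 9),
    Pilot("supply-chain-forecast", 18),
    Pilot("retail-personalisation", None),
    Pilot("invoice-matching", 20),
    Pilot("marketing-copy", None),
]

rate_12 = failure_rate(pilots, 12)
rate_24 = failure_rate(pilots, 24)
print(f"12-month window: {rate_12:.0%} counted as failures")
print(f"24-month window: {rate_24:.0%} counted as failures")
```

With this made-up portfolio, the same five pilots yield an 80% failure rate under the twelve-month definition and 40% under the twenty-four-month one. Nothing about the pilots changed, only the measurement window.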

Methodology and Media Amplification

What the Report Actually Measured

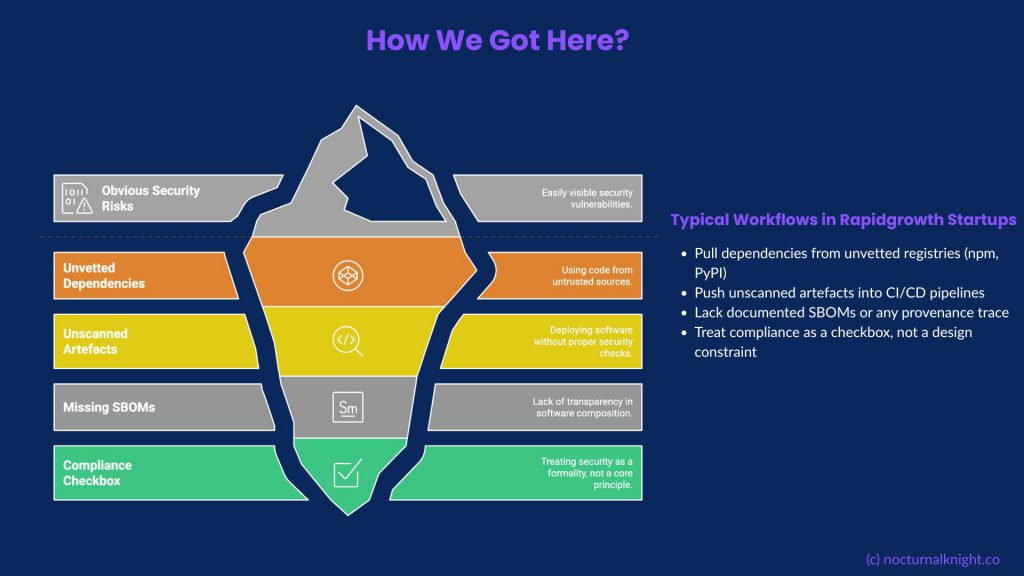

The MIT researchers used surveys, interviews, and case studies across industries. Their central finding was simple: technical success rarely equals business success. Many AI pilots met functional requirements but failed to integrate into core operations. Common causes included:

- Poor integration with existing systems

- Lack of process redesign and staff engagement

- Weak governance and measurement frameworks

The few successes focused on back‑office automation, supply chain optimisation, and compliance efficiency rather than high‑visibility customer applications.

How the Media Oversimplified It

Commentators such as Forbes contributor Andrea Hill and the Marketing AI Institute noted that the report defined “failure” narrowly, tying it to short‑term financial metrics. Yet outlets like Axios and TechRadar amplified the “95% fail” headline without that context, feeding a viral narrative that flattened the nuance of the original findings.

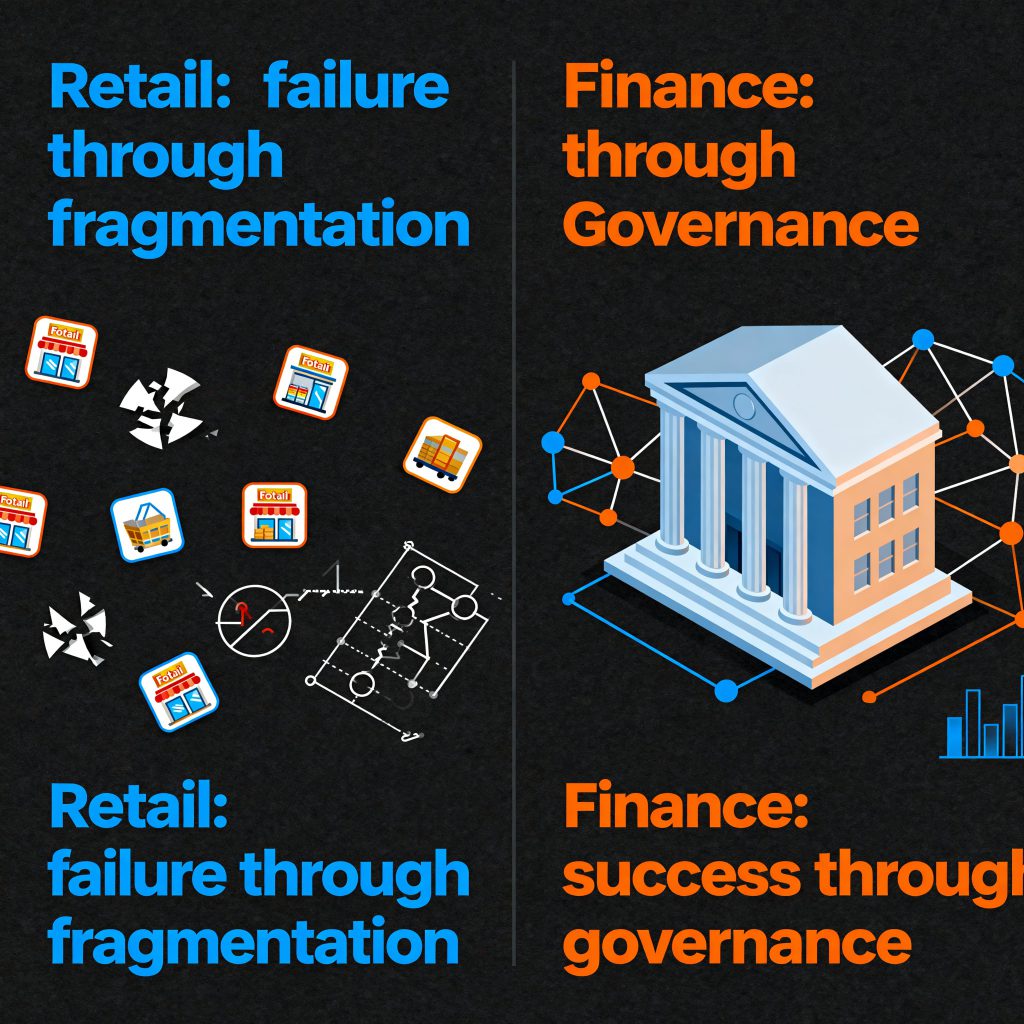

Case Study 1: Retail Personalisation Gone Wrong

One of the case studies cited in Fortune and Tom’s Hardware involved a retail conglomerate that launched a GenAI‑driven personalisation engine across its e‑commerce sites. The goal was to revolutionise product recommendations using behavioural data and generative content. After six months, however, the project was halted due to three key issues:

- Data Fragmentation: Customer information was inconsistent across regions and product categories.

- Governance Oversight: The model generated content that breached brand guidelines and attracted regulatory scrutiny under UK GDPR.

- Cultural Resistance: Marketing teams were sceptical of AI‑generated messaging and lacked confidence in its transparency.

MIT categorised the case as a “technical success but organisational failure”. The system worked, yet the surrounding structure did not evolve to support it. This is a classic AI readiness mismatch: advanced technology operating within an unprepared organisation.

Case Study 2: Financial Services Success through Governance

Conversely, the report highlighted a financial services firm that used GenAI to summarise compliance reports and automate elements of regulatory submissions. Initially regarded as a modest internal trial, it delivered measurable impact:

- 45% reduction in report generation time

- 30% fewer manual review errors

- Faster auditor sign‑off and improved compliance accuracy

Unlike the retail example, this organisation approached AI as a governed augmentation tool, not a replacement for human judgement. It embedded explainability and traceability from the outset, incorporating human checkpoints at every stage. The project became one of the few examples of steady, measurable ROI, demonstrating that AI governance and cultural alignment are decisive success factors.

What the Press Got Right (and What It Missed)

Where the Media Was Accurate

Despite the sensational tone, several publications identified genuine lessons:

- Integration and readiness are greater obstacles than algorithms.

- Governance and process change remain undervalued.

- KPIs for AI success are often poorly defined or absent.

What They Overlooked

Most commentary ignored the longer adoption cycle required for enterprise transformation, along with gains that never reach the P&L:

- Time Horizons: Real returns often appear after eighteen to twenty‑four months.

- Hidden Gains: Productivity, compliance, and efficiency improvements often remain off the books.

- Sector Differences: Regulated industries must balance caution with innovation.

The Real Takeaway: Why Most AI Pilots Struggle

It Is Not the AI, It Is the Organisation

The high failure rate underscores organisational weakness rather than technical flaws. Common pitfalls include:

- Hype‑driven use cases disconnected from business outcomes

- Weak change management and poor adoption

- Fragmented data pipelines and ownership

- Undefined accountability for AI outputs

The Governance Advantage

Success tracks AI governance maturity closely. Standards and regulations such as ISO/IEC 42001, the NIST AI RMF, and the EU AI Act provide the structure and accountability that bridge the gap between experimentation and operational success. Firms that adopt them are better placed to scale quickly and to deliver reliable ROI.

Turning Pilots into Profit: The Enterprise Playbook

- Start with Measurable Impact: Target compliance automation or internal processes where results are visible.

- Design for Integration Early: Align AI outputs with established data and workflow systems.

- Balance Build and Buy: Work with trusted partners for scalability while retaining data control.

- Define Success Before You Deploy: Set clear success metrics, review cycles, and accountable owners.

- Govern from Day One: Build explainability, ethics, and traceability into every layer of the system.
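
As one way to make “Define Success Before You Deploy” concrete, the sketch below records metrics, a review cycle, and an accountable owner before a pilot starts. It is an illustrative assumption, not a method from the MIT report: the class names `SuccessMetric` and `PilotCharter`, the metric names, and all the numbers are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SuccessMetric:
    name: str
    baseline: float
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        """True when the observed value reaches the agreed target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


@dataclass
class PilotCharter:
    pilot: str
    owner: str               # person accountable for the outcome
    review_cycle_weeks: int  # how often results are reviewed
    metrics: list = field(default_factory=list)

    def review(self, observed: dict) -> dict:
        """Pass/fail per metric; a metric with no observation counts as not met."""
        return {
            m.name: m.name in observed and m.met(observed[m.name])
            for m in self.metrics
        }


# Hypothetical charter; every value here is made up for illustration.
charter = PilotCharter(
    pilot="compliance-report-summaries",
    owner="Head of Compliance Operations",
    review_cycle_weeks=6,
    metrics=[
        SuccessMetric("report_generation_hours", baseline=10.0, target=6.0,
                      higher_is_better=False),
        SuccessMetric("manual_review_errors", baseline=20.0, target=15.0,
                      higher_is_better=False),
    ],
)

result = charter.review({"report_generation_hours": 5.5})
print(result)
```

Treating an unmeasured metric as “not met” is a deliberate choice in this sketch: it keeps a pilot from being declared a success simply because nobody collected the data.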

What This Means for Boards and Investors

The greatest risk for boards is not AI failure but premature scaling. Decision‑makers should:

- Insist on readiness assessments before funding.

- Link AI investments to ROI‑based performance indicators.

- Recognise that AI maturity reflects governance maturity.

By treating governance as the foundation for value creation, organisations can avoid the pitfalls that led to the 95% failure headline.

Conclusion: Separating Signal from Noise

The viral MIT “95% failure” claim is only partly accurate. It exposes an uncomfortable truth: AI does not fail, organisations do. The underlying issue is not faulty algorithms but weak governance, unclear measurement, and over‑inflated expectations.

True AI success emerges when technology, people, and governance work together under measurable outcomes. Those who build responsibly and focus on integration, ethics, and transparency will ultimately rewrite the 95% narrative.

References and Further Reading

- Axios. (2025, August 21). AI on Wall Street: Big Tech’s Reality Check.

- Tom’s Hardware. (2025, August 21). 95 Percent of Generative AI Implementations in Enterprise Have No Measurable Impact on P&L.

- Fortune. (2025, August 21). Why 95% of AI Pilots Fail and What This Means for Business Leaders.

- Marketing AI Institute. (2025, August 22). Why the MIT Study on AI Pilots Should Be Read with Caution.

- Forbes (Andrea Hill). (2025, August 21). Why 95% of AI Pilots Fail and What Business Leaders Should Do Instead.

- TechRadar. (2025, August 21). American Companies Have Invested Billions in AI Initiatives but Have Basically Nothing to Show for It.

- Investors.com. (2025, August 22). Why the MIT Study on Enterprise AI Is Pressuring AI Stocks.